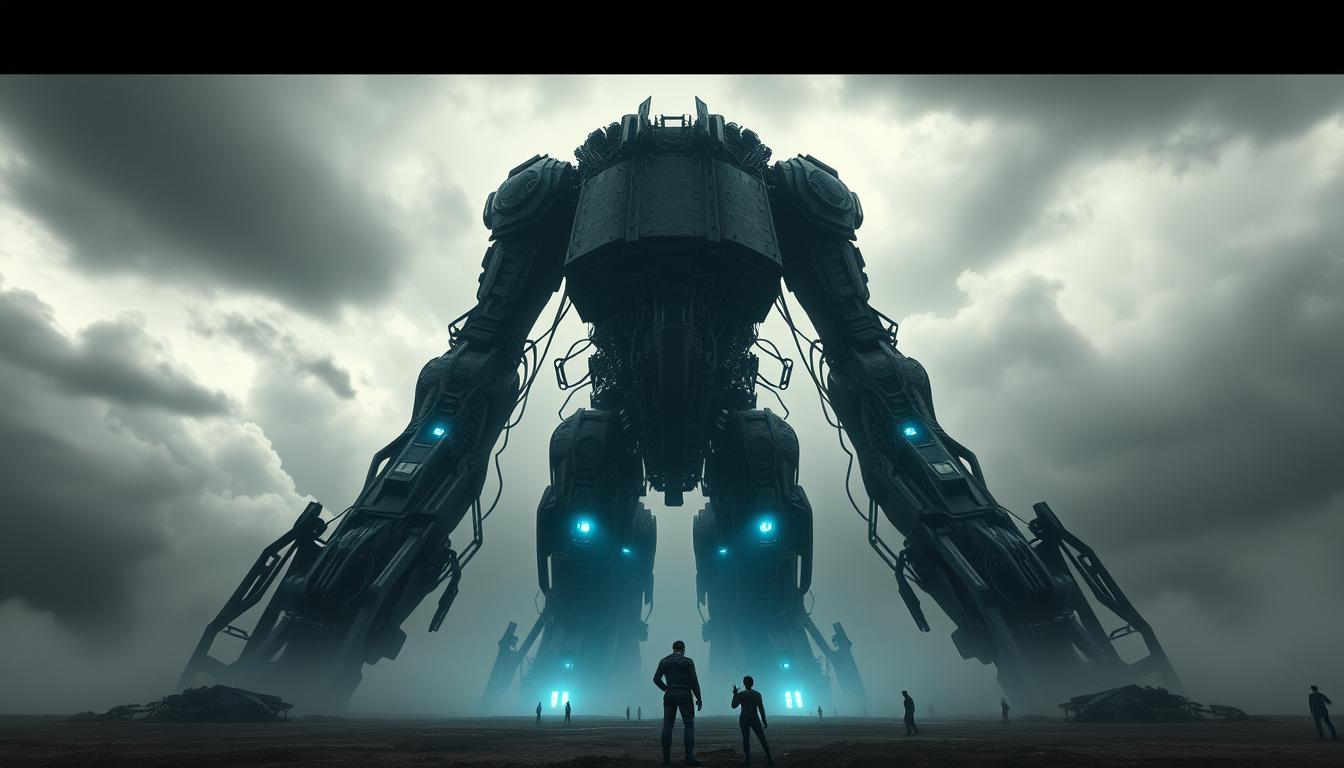

Did you know that over 50% of jobs in the United States could be automated by artificial intelligence within the next few decades? While AI offers unprecedented opportunities, it also raises significant safety concerns, up to and including existential risk, that cannot be overlooked.

The integration of artificial intelligence into various aspects of society raises questions about its potential implications and the existential risks it may pose. From job displacement to ethical dilemmas and security threats, the range of risks associated with AI is vast and complex.

In this article, we will explore the multifaceted risks of artificial intelligence. We will examine how these challenges could affect our future and what measures can be taken to mitigate them. Understanding these risks is crucial, as it provides the foundation for informed discussions on AI safety concerns and prepares us for the evolving technological landscape.

Key Takeaways

- Over 50% of jobs in the US could be automated by AI in the near future.

- AI integration brings substantial safety concerns, including existential risk.

- Potential implications range from job displacement to ethical dilemmas and security threats.

- Understanding AI risks is critical for informed discussions and future preparations.

- This article will explore various aspects of AI risks and mitigation strategies.

Understanding the Basics of Artificial Intelligence

Artificial Intelligence (AI) is a transformative technology that is revolutionizing various sectors globally. It’s essential to grasp what AI entails, its types, and its significance in today’s world before exploring its ethical complexities.

Definition and Overview

AI simulates human intelligence processes through machines, notably computer systems. These processes include learning, reasoning, problem-solving, perception, and language understanding. Over the years, AI development has advanced significantly, becoming crucial in applications from chatbots to autonomous vehicles.

Types of Artificial Intelligence

AI is commonly divided into two types:

- Narrow or Weak AI: Designed for a specific task, such as facial recognition or internet searches. These systems operate within a limited, pre-defined range.

- General or Strong AI: Capable of performing any intellectual task a human can. This type remains largely theoretical, though it is a long-term goal of some AI development research and a central concern of AI ethics.

Importance in Modern Society

AI is pivotal in modern society, impacting industries like healthcare, finance, transportation, and entertainment. It automates routine tasks, provides insights through data analysis, and enables innovations previously deemed impossible. This broad influence highlights the necessity for robust AI ethics and responsible AI development to ensure these advancements benefit humanity.

Potential Risks of AI Integration

Artificial intelligence’s progression into various sectors brings forth substantial challenges. Job displacement through automation and the economic hurdles of AI deployment are critical concerns. Privacy and data security risks escalate as AI systems advance in complexity.

Job Displacement and Economic Impact

The swift evolution of AI technology is set to revolutionize industries by automating tasks previously handled by humans. This innovation boosts efficiency but also threatens job security. Workers in sectors like manufacturing, retail, and transportation are at risk of being replaced by intelligent machines.

This transformation could intensify the economic challenges of AI, widening the gap between those skilled to work alongside AI and those who are not. Thus, economic inequality may increase due to AI integration.

Privacy Concerns and Data Security

The increasing use of AI leads to the collection and processing of vast amounts of data, raising significant privacy concerns. AI systems often need access to sensitive information, posing substantial data security risks. Unauthorized access and data breaches could result in severe ethical and legal repercussions.

The AI control problem, the difficulty of managing and overseeing AI behavior, compounds these risks. Ensuring AI systems comply with privacy standards and protecting against potential data misuse is a critical challenge for organizations and regulators.

Ethical Dilemmas in AI Development

As AI evolves, the need to address ethical dilemmas intensifies. It is crucial to understand how these technologies affect human rights and justice. The central issue is the AI value learning problem, which asks how AI systems can learn and stay aligned with human values.

Bias and Discrimination

Artificial Intelligence systems have exhibited bias, leading to discrimination. This bias often stems from the data they are trained on, which may reflect societal prejudices. AI ethical issues emerge when these biased outcomes affect decision-making in critical areas such as hiring, law enforcement, and finance.

Accountability and Responsibility

Accountability is another critical aspect. In cases of AI malfunction or unethical behavior, determining responsibility is complex. AI ethical issues underscore that developers, companies, and users all bear some responsibility. Grasping the AI value learning problem and implementing effective frameworks is essential for overcoming these challenges.

Security Threats Linked to AI

Artificial intelligence’s advancement complicates the security threat landscape. It’s essential to recognize the AI risks associated with its expansion, notably in cybersecurity and military spheres. Grasping these dangers is vital for achieving thorough AI safety.

Cybersecurity Risks

One such risk is AI’s potential to enhance cyberattacks. AI technologies can be used to develop advanced hacking tools that evade conventional security systems. These tools might automate attacks, widen their scope, and exploit vulnerabilities at unprecedented speed. Thus, it’s critical to implement strong defenses to safeguard critical data and uphold AI safety in digital realms.

Autonomous Weapons

The emergence of autonomous weapons poses a substantial AI risk. These AI-driven military systems can make and execute decisions without human intervention, sparking ethical and safety debates. Their deployment in conflicts could significantly jeopardize global security. The need for strict regulations and international agreements governing the use of such technology is therefore clear.

Lack of Regulation and Governance

As AI permeates various sectors, the dearth of structured AI governance poses substantial hurdles. The technology’s rapid advancement outpaces regulatory bodies, leading to a disjointed legislative framework.

Current Legislative Framework

Currently, AI legislation is scattered and inconsistent across different regions. In the United States, individual states have implemented their own regulations, leaving a gap where comprehensive federal policy would be. This disparity complicates compliance and enforcement for companies and developers.

An article from the Brookings Institution, “The Three Challenges of AI Regulation,” emphasizes the need for uniform standards.

Challenges in Policy Formation

Several factors make AI policy difficult to write. Policymakers must navigate the delicate balance between innovation and ethics. They also need to ensure regulations are both adaptable and precise. The global scope of AI development further complicates the legislative process.

Lawmakers often lack the technical expertise to craft effective policies. This is exacerbated by the technology’s rapid evolution, which frequently renders regulations obsolete or irrelevant.

Overall, the current state of AI regulation highlights the critical need for effective AI governance. Policymakers must address these legislative challenges so that both innovation and ethical standards are upheld.

Dependence on AI Systems

Advancements in technology have heightened our reliance on artificial intelligence (AI) across various sectors. This growing dependency introduces substantial challenges and implications for decision-making and workforce skills.

Impact on Decision-Making

The integration of AI into critical decision-making processes poses numerous risks. A significant concern is that humans may over-rely on AI recommendations, which can diminish their own decision-making abilities. When people lean heavily on AI analysis, nuanced human judgment and ethical considerations might be overlooked.

This oversight could lead to decisions that are technically sound but morally or socially questionable. The human-AI relationship becomes critical in addressing these risks.

Skills Degradation in Human Workforce

Heavy AI usage also poses a threat to the skills of the human workforce. As machines assume more responsibilities, there is a risk that essential human skills may erode. Tasks requiring critical thinking, problem-solving, and creative ideation could decline as AI systems handle these functions.

This shift not only affects individual professionals but also impacts the broader economic landscape. It creates a disparity between AI capabilities and human aptitudes. Understanding the human-AI relationship is essential in mitigating these risks.

By finding a balance between leveraging AI for efficiency and preserving human expertise, we can navigate the complexities of AI integration. This approach ensures that essential human skills and decision-making capabilities are not compromised.

Unintended Consequences of AI

Artificial intelligence has profoundly impacted modern society, yet its rapid evolution has introduced unforeseen challenges. The spread of misinformation and manipulation, coupled with the unpredictable nature of machine learning systems, are primary concerns. These issues underscore the need for a more nuanced understanding of AI’s role in our lives.

Misinformation and Manipulation

The integration of AI in media and communication has amplified the dissemination of false information. Machine learning algorithms, designed to optimize content, can inadvertently prioritize misleading content. This exacerbates the problem, leading to widespread manipulation. Individuals and entities exploit these tools, compromising public trust and understanding.

Unpredictable Behavior in Machine Learning

Unpredictability is a critical concern among machine learning risks. Complex AI systems often exhibit behaviors that are not fully comprehensible or predictable, even by their creators. This unpredictability can lead to a range of negative outcomes, from minor inefficiencies to critical failures. As AI becomes more prevalent in various industries, addressing these risks is crucial for maintaining stability and safety.

Recognizing and mitigating these unintended consequences is vital for the responsible advancement and application of AI technologies. By adopting a vigilant approach, we can maximize AI’s benefits while minimizing its risks.

AI and Human Interaction

The advent of AI has significantly altered human interaction, reshaping social skills and communication patterns. AI technologies, now ubiquitous, exert profound effects on our social interactions. This transformation is a direct result of the widespread adoption of AI in our daily lives.

Impacts on Social Skills

AI-driven tools, such as chatbots and virtual assistants, are becoming integral to our daily routines. These innovations streamline tasks and enhance information access. Yet, their increasing presence might erode our ability to engage in face-to-face interactions. The over-reliance on AI could diminish our capacity to interpret social cues and engage in deep conversations. Understanding these dynamics is crucial as society adapts to an AI-enhanced world.

Changes in Communication Patterns

The rise of AI has led to a significant shift towards digital communication and automated responses. Traditional personal interactions are now often supplemented or replaced by AI interfaces. This shift alters the essence of conversations, making them more efficient but potentially less personal. The integration of AI into communication is a trend that continues to evolve, reshaping how we interact with one another.

The Role of Transparency in AI

In the rapidly evolving landscape of artificial intelligence, maintaining AI transparency is crucial for fostering user trust. Transparency ensures that users can comprehend how AI systems make decisions. This leads to more informed interactions and mitigates potential misunderstandings or misuse of technology.

Importance of Explainable AI

Explainable AI is pivotal in enhancing AI transparency. By providing clear, comprehensible explanations of AI processes and decisions, users are equipped with the knowledge necessary to understand, trust, and effectively use AI systems. This not only leads to greater trust in AI but also encourages the responsible use of technology.

Building Trust with Users

To build a lasting and trust-filled relationship between humans and AI, it is essential to adopt best practices that ensure AI transparency. This involves making algorithms understandable and accessible, thus fostering a sense of trustworthiness. Regular updates and open communication about AI capabilities and limitations can significantly enhance user trust in AI systems.

By prioritizing transparency in AI, developers and businesses can create more reliable, ethical, and user-friendly AI technologies. This ultimately benefits society at large.

The Future Landscape of AI Risks

The progression of artificial intelligence is transforming our world, presenting both opportunities and challenges. As AI technologies evolve, it is crucial to address existential risk and the other challenges that future AI systems may bring.

Emerging Technologies and New Threats

Emerging technologies, such as advanced machine learning, quantum computing, and autonomous systems, introduce unique risks. These advancements could lead to new cyber threats, autonomous weaponry, and privacy concerns. The complexity and interconnectedness of these technologies demand a proactive approach to identifying and mitigating potential risks. Our capacity to foresee and counter these dangers will be pivotal in securing a safe future.

Preparing for Future Challenges

Preparing for the future of AI involves sustained investment in research and development, alongside robust ethical and regulatory frameworks. Governments, organizations, and individuals must collaborate to foster an environment where AI can flourish safely. This collective effort will ensure we are equipped to handle any AI existential risk that may emerge, striking a balance between innovation and caution.

Mitigation Strategies for AI Risks

The increasing integration of artificial intelligence (AI) calls for effective strategies to mitigate potential risks. Implementing best practices for developers and raising public awareness of AI risks are crucial. These actions enhance AI safety measures, ensuring more secure and ethical advancements in the field.

Best Practices for Developers

AI developers are instrumental in ensuring the safety and reliability of AI systems. They must implement transparent algorithmic designs and conduct thorough bias audits to prevent discrimination. Regular security assessments are vital for safeguarding data integrity and privacy. From the outset, integrating robust ethical guidelines ensures AI applications align with societal values and legal standards. This fosters trust and accountability.
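To make the idea of a bias audit more concrete, here is a minimal sketch in Python. It assumes one possible audit metric, comparing the rate of favorable decisions across groups; the article does not prescribe a specific method. The function names, the group labels, and the sample data are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favorable decisions for each group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the system produced a favorable decision.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest to the highest group selection rate.

    A value of 1.0 means every group is selected at the same rate;
    lower values indicate a larger gap between groups.
    """
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable decision
    return min(rates.values()) / highest


# Hypothetical audit data: 10 decisions for each of two made-up groups.
decisions = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates, ratio)
```

A low ratio does not prove discrimination on its own, but it flags a gap that a human reviewer should investigate. Real audits typically combine several metrics and examine the training data as well as the outcomes.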

Educating the Public on AI Risks

Public awareness of AI risks is essential for a society capable of navigating AI complexities. Educational initiatives should demystify AI technologies and elucidate their potential impacts on daily life and personal privacy. Encouraging informed discussions about AI’s benefits and drawbacks cultivates a public that is not only aware but actively engaged in shaping regulations. By promoting AI safety measures and emphasizing transparent communication, we can collectively minimize AI risks.